The Cognitive Cost of AI: What MIT's Brain Activity Study Means for Your Recruitment Strategy

I've spent the last five years watching AI transform recruitment from the inside out.

I've seen teams cut screening time by 70%. I've watched conversion rates double. I've helped small staffing firms compete with corporate giants using nothing but smart AI implementation and the right data strategy.

But MIT just published research that made me stop and think differently about how we're deploying these tools.

The study found something unexpected: people who used ChatGPT to write essays showed significantly weaker brain activity associated with cognitive processing than people who wrote without AI assistance.

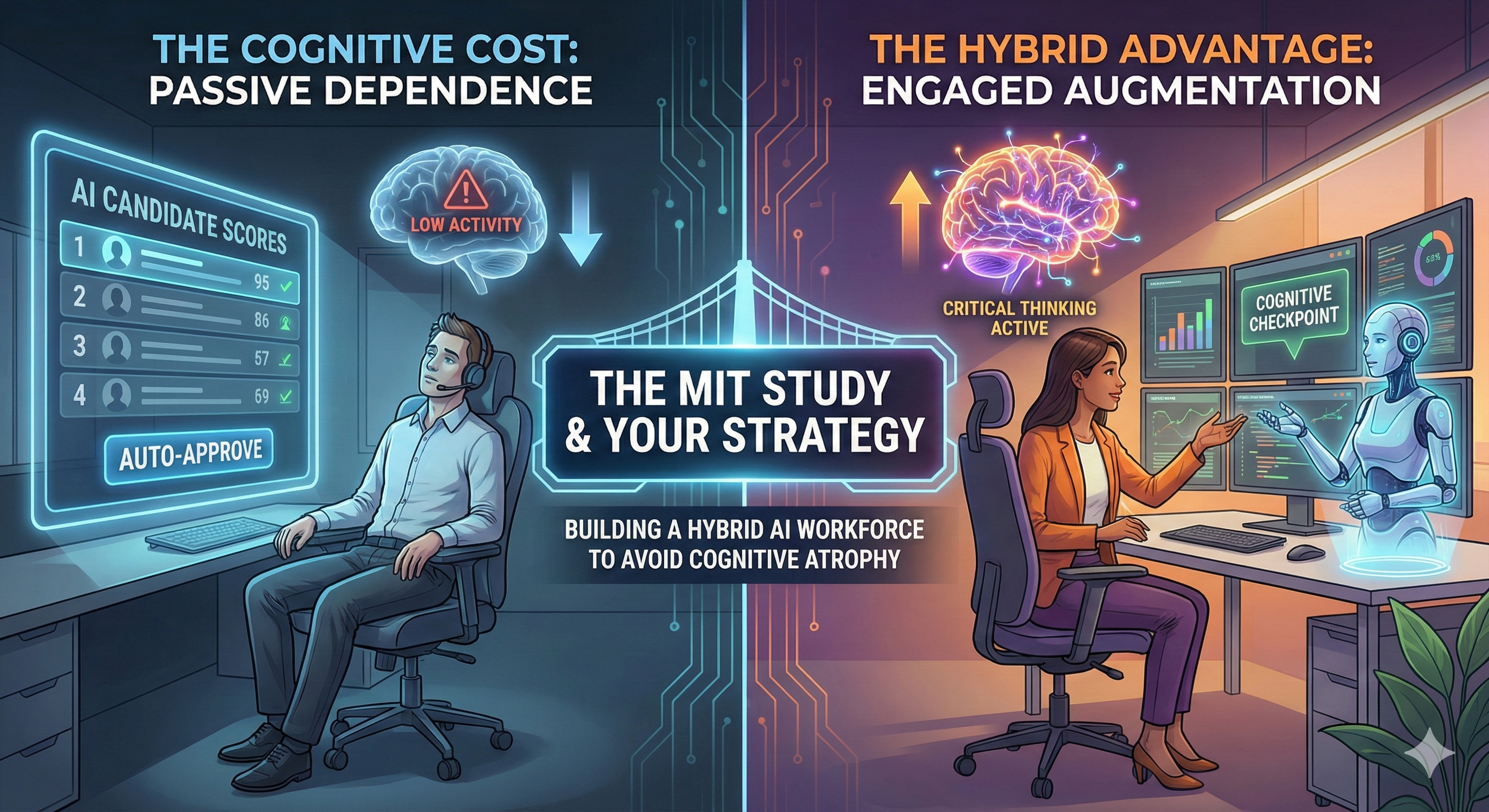

This isn't about AI being bad. This is about understanding what happens when we hand over thinking to machines without a strategy for maintaining our cognitive edge.

What the Research Actually Shows

MIT researchers used EEG to measure participants' brain activity while they wrote essays. The results were clear.

When participants used ChatGPT, their brains showed reduced activity in regions associated with deep cognitive processing. The AI handled the heavy lifting. The humans became editors and approvers.

A separate survey of white-collar workers pointed the same way: higher confidence in AI tools correlated with less critical thinking effort.

Even schoolchildren in the UK, surveyed separately, echoed this concern. They reported that their problem-solving skills were diminishing as they relied more heavily on AI assistance.

Here's what this means in practical terms:

AI boosts efficiency. That's undeniable. But it creates a risk of over-dependence that erodes the very skills that make humans valuable in the first place.

The Recruitment Parallel You Need to Understand

I've seen this pattern emerge in recruitment teams already.

A staffing manager implements an AI screening tool. Within weeks, the team stops reading resumes closely. They trust the algorithm's rankings. They skip the intuitive assessment that once caught red flags the system missed.

The tool made them faster. It also made them less sharp.

This mirrors what the MIT study found. When AI handles cognitive tasks, our brains adapt by doing less of that work. It's not laziness. It's neurological efficiency.

Your brain conserves energy by offloading tasks to reliable external systems. That's how we evolved to use tools. But in recruitment, that offloading can cost you the judgment that separates good hires from great ones.

Why the Hybrid AI Workforce Model Matters More Now

This research validates something I've been building toward for years: the Hybrid AI Workforce framework.

The framework isn't about using AI to replace human judgment. It's about using AI to augment human capabilities while keeping the cognitive muscle active.

Here's the distinction:

Pure AI automation handles the task and delivers the answer. Your brain disengages. Over time, you lose the ability to do that task without the AI.

Hybrid AI augmentation handles the repetitive grunt work and surfaces insights. Your brain stays engaged in analysis, pattern recognition, and decision-making. You get faster and sharper simultaneously.

The MIT study illustrates what can happen when we choose automation over augmentation. We gain efficiency but risk sacrificing cognitive development.

In recruitment, that trade-off is dangerous.

The Three Cognitive Risks AI Creates in Recruitment

Based on this research and my experience deploying AI in staffing operations, I see three specific risks:

1. Pattern Recognition Atrophy

Experienced recruiters develop an instinct for candidate quality. They spot inconsistencies in work history. They notice communication patterns that signal culture fit or misalignment.

When AI pre-screens every candidate, recruiters stop developing this pattern recognition. They see only the candidates the algorithm approves. They never train their brains on the full spectrum of applicants.

Over time, they lose the ability to assess candidates independently. They become dependent on the AI's judgment.

2. Critical Thinking Decline

The white-collar survey data showed a clear correlation: higher confidence in AI meant less critical thinking effort.

In recruitment, this manifests as accepting AI recommendations without questioning the underlying logic.

Why did the AI rank this candidate third? What factors weighted most heavily? Are those factors actually predictive of success in your specific environment?

When you stop asking these questions, you stop thinking critically about your hiring process. You optimize for what the AI measures, not necessarily what drives performance in your organization.

3. Problem-Solving Skill Erosion

The UK schoolchildren recognized this risk intuitively. When AI solves problems for you, you stop developing the ability to solve them yourself.

In recruitment, this shows up when systems fail or edge cases appear.

Your AI screening tool goes down. Can your team still identify qualified candidates manually? Or have they forgotten how to read resumes without algorithmic guidance?

A candidate doesn't fit standard profiles but has unique value. Can your recruiters recognize that value? Or do they dismiss anyone the AI flags as non-standard?

How I'm Adjusting My AI Implementation Strategy

The MIT research doesn't change my belief in AI's transformative power. It refines how I deploy it.

Here's what I'm doing differently:

Building Cognitive Checkpoints

I'm designing AI systems that require human cognitive engagement at strategic points.

The AI surfaces insights and recommendations. But the human must articulate why they agree or disagree before moving forward.

This forces the brain to stay active in analysis rather than defaulting to approval mode.

In practice: Your AI ranks candidates. Before you act on those rankings, you document three factors that support or challenge the AI's assessment. This keeps your pattern recognition sharp.
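If you want the checkpoint enforced by the system rather than left to habit, the gate can be very small. Below is a minimal Python sketch, assuming a hypothetical `CheckpointReview` record and `can_proceed` function (neither comes from any particular recruitment tool): a candidate advances only after the reviewer states whether they agree or disagree with the AI and writes at least three non-blank factors.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical threshold matching the "three factors" practice described above.
REQUIRED_FACTORS = 3


@dataclass
class CheckpointReview:
    """A recruiter's documented reasoning about one AI-ranked candidate (hypothetical schema)."""
    candidate_id: str
    ai_rank: int
    stance: str  # "agree" or "disagree" with the AI's assessment
    factors: List[str] = field(default_factory=list)


def can_proceed(review: CheckpointReview) -> bool:
    """Return True only if the reviewer took a position and justified it."""
    if review.stance not in ("agree", "disagree"):
        return False
    # Ignore blank entries so placeholder text cannot satisfy the checkpoint.
    written = [f for f in review.factors if f.strip()]
    return len(written) >= REQUIRED_FACTORS


review = CheckpointReview(
    candidate_id="cand-042",
    ai_rank=3,
    stance="disagree",
    factors=["Two tenures under six months", "Strong client-facing examples", ""],
)
print(can_proceed(review))  # False: only two factors are filled in
```

The friction is the point. The gate doesn't judge whether the reasoning is good, only that it exists, and writing it down is what keeps the reviewer out of approval mode.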

Rotating AI Dependency

I'm implementing periodic "manual mode" exercises where teams complete tasks without AI assistance.

This isn't about rejecting technology. It's about maintaining the cognitive skills that make you valuable when the technology fails or faces novel situations.

In practice: Once monthly, your team screens a batch of candidates manually. You compare your assessments to what the AI would have recommended. You identify where human judgment added value the algorithm missed.
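One way to make that monthly comparison concrete is to score how much the two shortlists overlap and list where they differ. This is a minimal Python sketch, assuming both shortlists are sets of candidate IDs for the same batch; the Jaccard overlap used here is an illustrative metric I've picked, not one any specific tool reports.

```python
from typing import Dict, Set


def compare_shortlists(manual: Set[str], ai: Set[str]) -> Dict[str, object]:
    """Compare a manually screened shortlist with the AI's shortlist for the same batch."""
    union = manual | ai
    # Jaccard overlap: 1.0 means identical shortlists, 0.0 means no candidates in common.
    agreement = len(manual & ai) / len(union) if union else 1.0
    return {
        "agreement": agreement,
        # Candidates human judgment surfaced that the AI did not shortlist.
        "human_only": sorted(manual - ai),
        # Candidates the AI shortlisted that the team passed over.
        "ai_only": sorted(ai - manual),
    }


result = compare_shortlists(
    manual={"cand-01", "cand-02", "cand-03", "cand-04"},
    ai={"cand-02", "cand-03", "cand-04", "cand-05"},
)
print(result["agreement"])   # 0.6
print(result["human_only"])  # ['cand-01']
print(result["ai_only"])     # ['cand-05']
```

The `human_only` list is the most useful output. Each entry is a case to discuss, where someone on the team should be able to explain what they saw that the algorithm didn't.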

Teaching AI Literacy, Not Just AI Usage

Understanding how AI makes decisions is different from using AI to make decisions.

I'm training teams to understand the logic behind AI recommendations. What data does it weigh? What patterns does it recognize? Where are its blind spots?

This knowledge keeps your brain engaged in critical analysis rather than passive acceptance.

In practice: When implementing a new AI tool, you spend time understanding its training data, its algorithmic approach, and its known limitations. You document scenarios where human judgment should override AI recommendations.

The Data Architecture That Supports Cognitive Engagement

The MIT study highlights a risk. But it also points toward a solution.

The problem isn't AI assistance. The problem is AI assistance that removes humans from cognitive engagement.

This is where data architecture becomes critical.

When you build recruitment systems that capture data throughout the employee lifecycle, you create feedback loops that keep humans cognitively engaged.

You're not just accepting AI recommendations. You're analyzing whether those recommendations led to successful hires. You're identifying patterns the AI missed. You're refining the system based on real outcomes.

This is the foundation of the Hybrid AI Workforce model: AI handles scale and speed, humans handle insight and adaptation.

Your AI screens 1,000 applicants and identifies the top 50. Your team analyzes those 50 and documents why they hired candidate 7 instead of candidate 3. That documentation feeds back into the system, improving future recommendations while keeping your team's analytical skills sharp.
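The feedback loop only works if the reasons and outcomes are captured somewhere you can query later. Here is a minimal Python sketch, assuming a hypothetical `HireRecord` log where each hire stores the AI's rank, the team's written reason, and an outcome filled in later. What counts as "success" (retention through a review period, a performance rating) is your own definition, not something this sketch decides. It compares outcomes for hires inside and outside the AI's top N.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class HireRecord:
    """One hiring decision and its later outcome (hypothetical fields)."""
    candidate_id: str
    ai_rank: int                      # position in the AI shortlist, 1 = top
    reason: str                       # why the team chose this candidate
    succeeded: Optional[bool] = None  # filled in later, e.g. after a review period


def success_by_rank(records: List[HireRecord], top_n: int) -> Dict[str, Optional[float]]:
    """Compare success rates for hires inside vs. outside the AI's top N."""
    known = [r for r in records if r.succeeded is not None]

    def rate(group: List[HireRecord]) -> Optional[float]:
        # None signals "no data yet" rather than a 0% success rate.
        return sum(r.succeeded for r in group) / len(group) if group else None

    return {
        "top_n": rate([r for r in known if r.ai_rank <= top_n]),
        "below_top_n": rate([r for r in known if r.ai_rank > top_n]),
    }


records = [
    HireRecord("cand-07", 7, "Niche domain experience the ranking underweights", True),
    HireRecord("cand-03", 3, "Strong keyword match on required skills", False),
    HireRecord("cand-01", 1, "Clear track record in a similar role", True),
    HireRecord("cand-12", 12, "Career changer with transferable skills", None),
]
print(success_by_rank(records, top_n=5))  # {'top_n': 0.5, 'below_top_n': 1.0}
```

With only a handful of hires these rates are noisy. The value is in the habit of writing down reasons and revisiting them against outcomes, not in a statistical verdict on the model.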

What This Means for Small and Mid-Sized Staffing Firms

You have an advantage here.

Large corporations often deploy AI as pure automation, aiming to remove as many human touchpoints as possible. At their scale, that pressure is hard to resist.

You can deploy AI as augmentation. You can maintain the human cognitive engagement that creates better outcomes while still gaining efficiency.

This is how you compete: not by replacing your recruiters with AI, but by making your recruiters more effective through strategic AI augmentation that keeps their skills sharp.

The MIT research suggests that pure automation can lead to cognitive decline. Your competitive advantage is building systems that enhance cognitive capability instead.

The Questions You Should Ask About Your Current AI Tools

If you're already using AI in recruitment, evaluate it through this lens:

Does this tool require cognitive engagement, or does it just deliver answers?

Are my recruiters getting sharper or more dependent?

Can my team still perform effectively if the AI system fails?

Do we understand why the AI makes its recommendations?

Are we capturing data that lets us evaluate AI accuracy over time?

These questions determine whether you're building cognitive capability or eroding it.

The Future I'm Building Toward

The MIT study is a warning, not a condemnation.

AI will continue transforming recruitment. The firms that thrive will be those that deploy it strategically, maintaining human cognitive engagement while gaining efficiency.

This is the essence of the Hybrid AI Workforce: technology that makes humans more capable, not less necessary.

I'm building systems where AI handles repetitive tasks and surfaces insights, but humans remain actively engaged in analysis, pattern recognition, and decision-making.

The goal isn't to replace thinking. The goal is to elevate it.

Your recruiters shouldn't spend time manually screening 500 resumes. That's cognitive waste. AI handles that.

But they should spend time understanding why certain candidates succeed in your environment, recognizing patterns the AI hasn't learned yet, and making judgment calls on edge cases where algorithmic thinking falls short.

That's where human cognitive capability creates competitive advantage.

Moving Forward With Eyes Open

The MIT research gives us valuable data about AI's cognitive impact.

The question isn't whether to use AI in recruitment. That ship has sailed. The question is how to use it in ways that enhance rather than diminish human capability.

This requires intentional design. You need systems that keep cognitive muscles active while handling the grunt work that bogs down productivity.

You need data architecture that creates feedback loops requiring human analysis and insight.

You need training that builds AI literacy, not just AI usage.

This is the strategic advantage available to staffing firms willing to think beyond pure automation.

The firms that win won't be those with the most advanced AI. They'll be those that deploy AI in ways that make their human teams sharper, faster, and more insightful.

That's the future I'm building. That's the competitive edge the Hybrid AI Workforce model creates.

The MIT study supports what I've been seeing in practice: AI changes how our brains work. The question is whether we're designing systems that change them for the better.