The AI Honeymoon Is Over: Why 2026 Will Force Us to Rebuild Everything

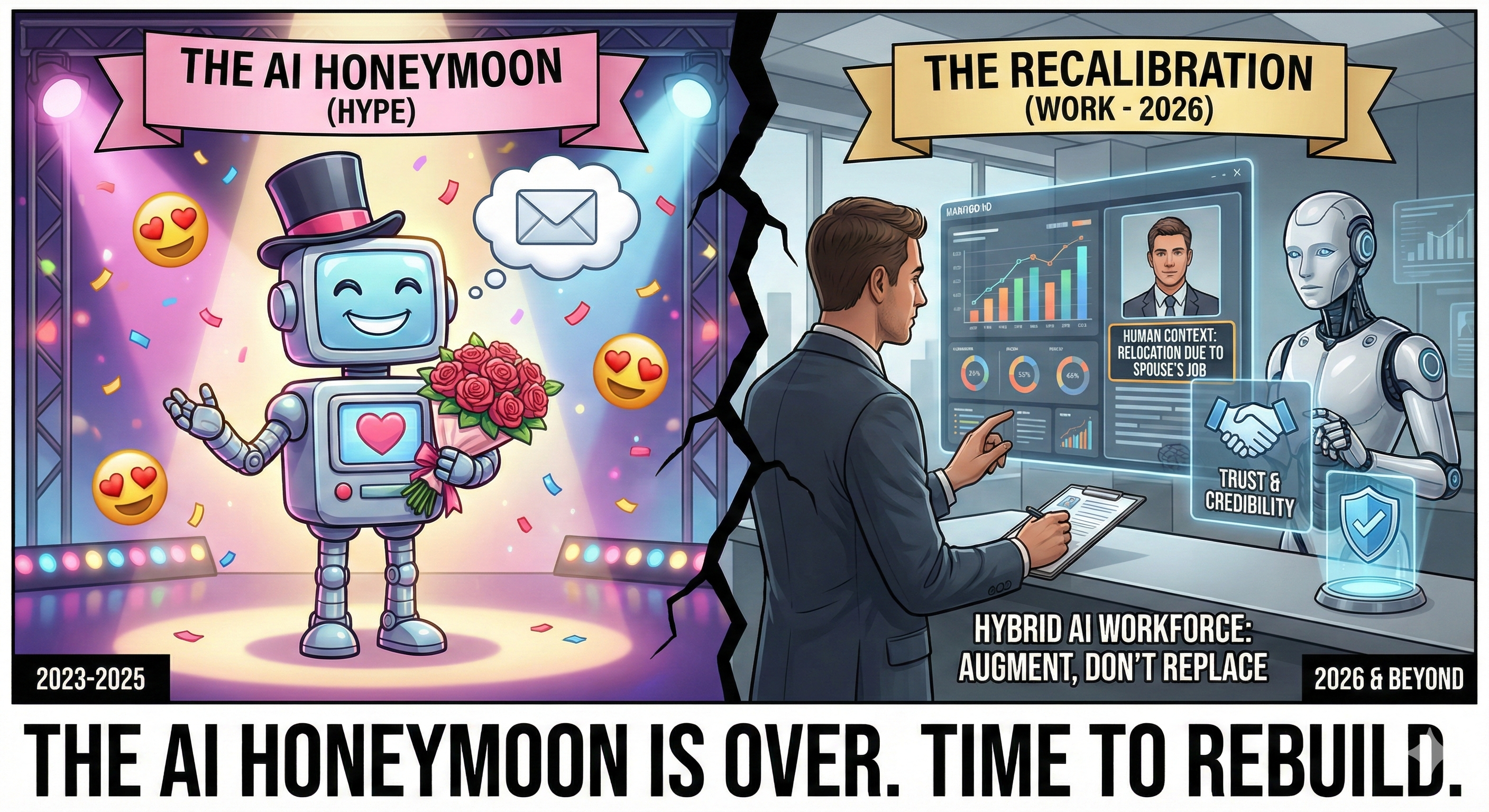

I'm calling it now: 2026 will be the year AI stops being magic and starts being work.

The honeymoon phase is ending. The breathless excitement about generative AI is giving way to something more honest—fatigue. Not because the technology failed, but because we finally understand what it can't do.

And what it can't do matters more than what it can.

The Cracks Are Already Showing

Here's what I've watched unfold over the past 18 months: businesses rush to implement AI, only to hit a wall when the systems can't grasp the messy, nuanced reality of how humans actually work.

Generative AI is brilliant at patterns. It's terrible at people.

You ask it to draft an email, and it nails the structure. But ask it to understand why your candidate ghosted you after three interviews, or why your top performer suddenly disengaged? It guesses. It infers. It generates something that sounds right but misses the human truth underneath.

This isn't a minor limitation. It's the limitation that will define the next phase of AI evolution.

Healthcare is the canary in the coal mine here. AI assistants in medical settings struggle with empathy, not because they lack data, but because empathy isn't a data problem. It's a human problem. You can't train a model to care. You can only train it to simulate caring, and patients tend to notice the difference.

The same dynamic plays out in recruitment, sales, customer support—any domain where understanding context, reading between the lines, and responding to emotional cues determines success.

Why Projects Are Stalling

I've talked to dozens of business leaders who started AI initiatives with genuine excitement. They're now stuck.

The reason? AI doesn't understand their workflow.

Your recruitment process isn't linear. Your sales cycle shifts based on market conditions, client mood, internal politics, and a hundred other variables that don't fit neatly into training data. Your business priorities change weekly, sometimes daily.

Generative AI needs you to explain everything. It waits for prompts. It requires constant course correction. It's like hiring someone brilliant who has zero institutional knowledge and needs hand-holding through every task.

That's not automation. That's overhead.

The promise was that AI would free us from repetitive work. The reality is that managing AI has become its own form of repetitive work. You're not eliminating tasks—you're trading one set of tasks for another.

And the ROI isn't materializing the way everyone hoped.

The Recalibration Is Coming

2026 will force a reckoning. Not a rejection of AI, but a recalibration of what we expect from it and how we build it.

The next generation of AI needs to solve for the things generative models can't: consistency, credibility, trust, and emotional intelligence.

Here's what that looks like in practice:

Consistency means the system delivers the same quality output regardless of who's using it or when. No more "it worked yesterday but today it's giving me garbage." Reliability becomes the baseline, not the exception.

Credibility means the AI can explain its reasoning in ways that make sense to humans. Not black-box outputs that you have to trust blindly, but transparent logic that you can audit, challenge, and refine.

Trust means the system operates within boundaries you set and respects the context you provide. It doesn't hallucinate facts or confidently present fiction as truth. It knows when it doesn't know.

Emotional intelligence means the AI can interpret tone, recognize frustration, detect urgency, and respond appropriately. Not with synthetic empathy, but with an accurate read of what the situation requires.

This isn't about making AI more human. It's about making AI more contextually aware.
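
To make "it knows when it doesn't know" a little more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `ModelAnswer` type, the `confidence` score (which would have to come from the model or a separate checker), the `ALLOWED_TOPICS` set, and the `MIN_CONFIDENCE` threshold are all hypothetical names and values, not part of any particular product.

```python
from dataclasses import dataclass


@dataclass
class ModelAnswer:
    text: str
    confidence: float  # hypothetical score in [0, 1], supplied by the model or a checker


# Hypothetical boundaries and threshold that a business would set for itself.
ALLOWED_TOPICS = {"scheduling", "job_description", "outreach"}
MIN_CONFIDENCE = 0.75


def guarded_reply(topic: str, answer: ModelAnswer) -> str:
    """Return the model's answer only when it is in bounds and confident enough."""
    if topic not in ALLOWED_TOPICS:
        return "ESCALATE: topic is outside the boundaries set for this assistant."
    if answer.confidence < MIN_CONFIDENCE:
        return "ESCALATE: not confident enough to answer; routing to a human."
    return answer.text
```

The point of the sketch is the shape, not the numbers: the system has an explicit way to decline and hand off instead of producing something that merely sounds right.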

Data as Individuals, Not Inputs

An article I read suggested AI needs to treat data as individuals rather than inputs. I think that's exactly right, but it's harder than it sounds.

Right now, AI sees your candidate database as a collection of attributes: skills, experience, location, salary expectations. It optimizes for matches based on those attributes.

But people aren't collections of attributes. They're stories. They have motivations, fears, aspirations, and circumstances that don't show up in a resume.

The AI that wins in 2026 and beyond will be the AI that can infer those stories from limited data—not by guessing, but by recognizing patterns in behavior, communication style, decision-making, and engagement.

This requires a fundamentally different architecture. Not just better models, but hybrid systems that combine AI's analytical power with human intuition and oversight.

Which, by the way, is exactly what I've been building with the Hybrid AI Workforce framework. Not because I predicted this moment, but because I lived through the limitations firsthand and built systems that work around them.

What This Means for Recruitment

If you're in recruiting or staffing, this recalibration hits you harder than most industries.

Your entire value proposition is built on understanding people—what motivates them, what they need, what will make them say yes to your offer and stay with your client long-term.

Generative AI can help you write better job descriptions. It can screen resumes faster. It can even predict which candidates are most likely to respond to outreach.

But it can't replace the recruiter who knows that a candidate's hesitation isn't about salary—it's about their spouse's job situation. Or that a client's vague feedback means they don't actually know what they want yet.

The firms that thrive in the next phase won't be the ones that replace recruiters with AI. They'll be the ones that augment recruiters with AI in ways that amplify their human judgment rather than bypassing it.

That's the Hybrid AI Workforce model. Human intelligence sets strategy, interprets nuance, and makes final decisions. AI handles data processing, pattern recognition, and execution at scale.

You don't eliminate the human. You elevate them.
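
As a rough illustration of that division of labor, the sketch below has the AI side rank candidates at scale while the recruiter's judgment stays final. It is not the Hybrid AI Workforce framework itself, just a minimal Python example; `Candidate`, `ai_score`, `recruiter_notes`, `ai_shortlist`, and `human_decision` are hypothetical names made up for this post.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    ai_score: float            # hypothetical match score from a model, 0 to 1
    recruiter_notes: str = ""  # context only a human has picked up


def ai_shortlist(candidates, top_n=3):
    """AI side: rank the whole pool and hand a short list to the recruiter."""
    return sorted(candidates, key=lambda c: c.ai_score, reverse=True)[:top_n]


def human_decision(shortlist, approve):
    """Human side: the recruiter's call (the `approve` function) is final."""
    return [c for c in shortlist if approve(c)]


pool = [
    Candidate("A", 0.92, "spouse's job may require relocating"),
    Candidate("B", 0.81),
    Candidate("C", 0.40),
    Candidate("D", 0.77),
]

shortlist = ai_shortlist(pool)
# The recruiter overrides the top-scoring match based on context the model never saw.
final = human_decision(shortlist, approve=lambda c: "relocating" not in c.recruiter_notes)
```

Here the highest-scoring candidate is the one the recruiter removes, which is the whole argument in miniature: the score is an input to the decision, not the decision.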

The Uncomfortable Truth About AI Fatigue

The fatigue people are feeling isn't about AI being bad. It's about AI being oversold.

We were told it would revolutionize everything overnight. We were promised autonomous systems that would run themselves. We were led to believe that prompts were the new programming and anyone could build anything.

None of that was true. Or rather, it was true in narrow, controlled scenarios but fell apart in messy, real-world applications.

The backlash is inevitable. We're already seeing it. The hype cycle is cresting, and the trough of disillusionment is coming fast.

But here's what most people will miss: the technology isn't the problem. The expectations were.

AI was never going to replace human judgment. It was always going to be a tool that amplifies it. The companies that understood this from the beginning are the ones that built sustainable systems. The ones that chased the hype are the ones hitting walls now.

What You Should Do Right Now

If you're running a recruiting firm, a staffing company, or any business that depends on understanding people, here's my advice:

Stop chasing the latest AI tool. Start building systems that integrate AI into your existing workflow in ways that make sense for your team.

Prioritize consistency over novelty. The AI that works every time is worth more than the AI that's impressive once.

Invest in data infrastructure. AI is only as good as the data you feed it. If your data is messy, incomplete, or siloed, no amount of AI sophistication will fix that.

Train your team to work with AI, not for AI. The goal isn't to make your recruiters into prompt engineers. It's to give them tools that handle the repetitive work so they can focus on the high-value human interactions.

Build hybrid systems from the start. Don't try to automate everything. Identify the tasks where AI excels, the tasks where humans excel, and design workflows that leverage both.

This isn't about waiting for better AI. It's about using today's AI more intelligently.
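
On the data infrastructure point above, a useful first step is simply measuring how incomplete your records are before any AI touches them. The Python sketch below is one way to do that under stated assumptions: the `REQUIRED_FIELDS` schema and the record layout (plain dictionaries) are hypothetical and would need to match whatever your ATS or CRM actually exports.

```python
# Hypothetical schema; replace with the fields your own system treats as required.
REQUIRED_FIELDS = ("name", "email", "skills", "location")

EMPTY_VALUES = (None, "", [])


def audit_records(records):
    """Count how many records are missing (or have empty) each required field."""
    missing = {field: 0 for field in REQUIRED_FIELDS}
    for record in records:
        for field in REQUIRED_FIELDS:
            if record.get(field) in EMPTY_VALUES:
                missing[field] += 1
    return missing


def completeness(records):
    """Share of records with every required field filled, from 0.0 to 1.0."""
    if not records:
        return 0.0
    complete = sum(
        all(r.get(field) not in EMPTY_VALUES for field in REQUIRED_FIELDS)
        for r in records
    )
    return complete / len(records)
```

Running `audit_records` on an export shows which fields are dragging quality down, and `completeness` gives a single number to track over time.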

The Real Revolution Starts After the Hype

2026 won't be the year AI dies. It will be the year AI grows up.

The recalibration will separate the businesses that built on hype from the businesses that built on fundamentals. The ones that survive will be the ones that understood AI was never about replacing humans—it was about giving humans superpowers.

The next phase of AI will be quieter, less flashy, and far more useful. It will focus on reliability over novelty, on trust over speed, on understanding over output.

And the businesses that embrace this shift—the ones that build hybrid systems, invest in data infrastructure, and prioritize human-AI collaboration—will dominate their markets while everyone else is still trying to figure out why their chatbot stopped working.

I've spent the last five years building these systems in one of the toughest recruiting markets in the country—truck driver acquisition in transportation and logistics. If AI can work there, it can work anywhere.

The question isn't whether AI will transform your business. It's whether you'll build systems that last beyond the hype cycle.

Because the honeymoon is over. The real work is just beginning.

And the companies that treat this as an opportunity rather than a disappointment will be the ones writing the next chapter of their industry.