The 7 Best Practices for Building AI-Ready HR Teams That Actually Work

I've spent the last five years watching companies stumble through AI adoption in recruitment and HR.

The pattern is predictable: They buy the tools, attend the webinars, and then wonder why nothing changes.

The missing piece isn't technology. It's the people who know how to use it.

According to Sapient Insights Group's 2025 survey, 31% of organizations now use AI in HR. I expect that number to roughly double by next year. But adoption without strategy creates chaos, not competitive advantage.

Here's what I've learned from implementing AI systems in recruiting and staffing: You need specific roles, specific skills, and specific frameworks to make AI work for your business.

This isn't theory. These are the best practices I've tested in real organizations facing real hiring challenges.

Create the AI Governance and Risk Lead Role First

Before you deploy a single AI tool, you need someone responsible for how it gets used.

The AI Governance and Risk Lead ensures your AI systems operate safely, fairly, and transparently. This person prevents bias from creeping into your hiring decisions and keeps you compliant with evolving regulations.

This role matters because AI systems learn from data. If your historical hiring data contains bias, your AI can reproduce it and even amplify it. I've seen companies accidentally filter out qualified candidates because their AI learned patterns from past decisions that favored certain demographics.

Your AI Governance Lead should:

Audit AI tools for bias before deployment

Monitor AI decision patterns for fairness issues

Document AI usage for compliance purposes

Train teams on ethical AI interaction

Create protocols for AI system failures

This person sits between your HR team and your technology. They translate business needs into technical requirements and technical capabilities into business outcomes.

Without this role, you're flying blind. With it, you build trust in your AI systems from day one.
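To make "audit AI tools for bias" concrete, here is a minimal sketch of one common first-pass check: comparing selection rates across groups and flagging any group whose rate falls below four-fifths of the highest group's rate. The four-fifths threshold is a rule of thumb from the US EEOC's Uniform Guidelines, not a legal verdict. The function names, the input shape, and the numbers are all assumptions made up for illustration; a real audit would use your own screening data and involve legal counsel.

```python
from typing import Dict, List, Tuple

# Hypothetical input shape: group label -> (candidates advanced, total applicants).
Outcomes = Dict[str, Tuple[int, int]]


def selection_rates(outcomes: Outcomes) -> Dict[str, float]:
    """Share of applicants in each group that the tool advanced."""
    return {
        group: advanced / applicants
        for group, (advanced, applicants) in outcomes.items()
        if applicants > 0  # skip empty groups to avoid dividing by zero
    }


def adverse_impact_ratios(outcomes: Outcomes) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    top_rate = max(rates.values())
    if top_rate == 0:
        # Nobody was advanced, so no group is behind another.
        return {group: 1.0 for group in rates}
    return {group: rate / top_rate for group, rate in rates.items()}


def flag_groups(outcomes: Outcomes, threshold: float = 0.8) -> List[str]:
    """Groups whose ratio falls below the threshold (0.8 = four-fifths rule)."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Made-up numbers for illustration only.
screening_results = {"group_a": (48, 120), "group_b": (18, 75)}
print(adverse_impact_ratios(screening_results))
print(flag_groups(screening_results))
```

A flagged group is a prompt for the Governance Lead to investigate, not proof of bias. Small applicant counts can produce low ratios by chance, so the check works best alongside a sample-size sanity check.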

Develop Data Literacy Across Your Entire HR Team

AI runs on data. Your HR team needs to understand what data means, where it comes from, and how to use it.

Data literacy isn't about becoming a data scientist. It's about asking the right questions when you look at AI-generated insights.

I've watched HR professionals ignore valuable AI recommendations because they didn't understand the data behind them. I've also seen them follow bad recommendations for the same reason.

Your team needs to know:

How to interpret AI confidence scores

What data quality looks like

When sample sizes are too small to trust

How to spot correlation versus causation

Which metrics actually predict success

Start with basic training on your applicant tracking system reports. Move to understanding candidate scoring algorithms. Eventually, your team should question AI outputs with the same critical thinking they apply to human recommendations.

This skill separates teams that use AI from teams that get results from AI.
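One item from the list above, knowing when a sample is too small to trust, is easy to show with numbers. The sketch below computes a Wilson score interval, a standard way to put a range around a percentage. The function name and the example figures are my own assumptions for illustration, not output from any particular HR tool.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% range (for z=1.96) around an observed success rate."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= successes <= n:
        raise ValueError("successes must be between 0 and n")
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Same 80% "success rate", very different certainty (made-up numbers).
small_sample = wilson_interval(8, 10)    # 8 of 10 hires worked out
large_sample = wilson_interval(80, 100)  # 80 of 100 hires worked out

for label, (low, high) in [("10 hires", small_sample), ("100 hires", large_sample)]:
    print(f"{label}: plausible true rate between {low:.0%} and {high:.0%}")
```

With ten hires the plausible range is far wider than with a hundred, even though both report 80%. When a dashboard shows a rate without the count behind it, that is the question to ask.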

Build Analytics Capabilities That Go Beyond Reporting

Most HR teams can pull reports. Few can analyze them.

The difference matters when you're using AI to make hiring decisions.

Analytics capabilities mean understanding trends, identifying patterns, and predicting outcomes. Robert Half's 2026 salary report shows higher compensation for roles that require data analytics and business intelligence skills, a sign of how much employers value these capabilities.

Your HR team needs people who can:

Identify which recruiting channels produce the best candidates

Predict which candidates will accept offers

Calculate the true cost of a bad hire

Measure the impact of process changes

Build dashboards that surface actionable insights

I use analytics to track every stage of the candidate journey. This data feeds my AI systems, which then predict which candidates are most likely to succeed in specific roles.

The cycle looks like this: Collect data, analyze patterns, train AI models, test predictions, refine based on outcomes.

Without strong analytics capabilities, you can't complete this cycle. You're just collecting data without extracting value.
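As a small illustration of moving from reporting to analysis, the sketch below answers the first question in the list above: which recruiting channels produce the best candidates. It assumes each candidate record carries a channel, a hired flag, and a hypothetical `retained_12mo` flag as a stand-in for quality. Those field names and the sample records are invented; substitute whatever your applicant tracking system actually exports.

```python
from collections import defaultdict
from typing import Dict, List, Optional


def channel_summary(candidates: List[dict]) -> Dict[str, dict]:
    """Per-channel applicant count, hire rate, and retention rate among hires."""
    counts = defaultdict(lambda: {"applicants": 0, "hires": 0, "retained": 0})
    for candidate in candidates:
        row = counts[candidate["channel"]]
        row["applicants"] += 1
        if candidate["hired"]:
            row["hires"] += 1
            if candidate.get("retained_12mo", False):
                row["retained"] += 1

    summary = {}
    for channel, row in counts.items():
        retention: Optional[float] = (
            row["retained"] / row["hires"] if row["hires"] else None
        )
        summary[channel] = {
            **row,
            "hire_rate": row["hires"] / row["applicants"],
            "retention_rate": retention,  # None when the channel has no hires yet
        }
    return summary


# Made-up records for illustration only.
records = [
    {"channel": "job_board", "hired": False},
    {"channel": "job_board", "hired": False},
    {"channel": "job_board", "hired": False},
    {"channel": "job_board", "hired": True, "retained_12mo": False},
    {"channel": "referral", "hired": True, "retained_12mo": True},
    {"channel": "referral", "hired": True, "retained_12mo": True},
    {"channel": "referral", "hired": False},
]

for channel, stats in channel_summary(records).items():
    print(channel, stats)
```

Volume alone would favor the job board here. Hire rate and retention tell a different story, which is the gap between pulling a report and analyzing it.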

Train Your Team on Large-Language Model Prompt Engineering

AI tools respond to how you ask questions.

The same AI system produces drastically different results based on prompt quality. I've seen recruiters get either generic candidate summaries or detailed behavioral assessments from the same AI tool, depending only on how they phrased the request.

Prompt engineering is the skill of communicating effectively with AI systems.

Your team should learn to:

Structure prompts with context, task, and desired format

Iterate on prompts based on output quality

Use examples to guide AI responses

Set constraints that prevent unhelpful outputs

Chain prompts together for complex tasks

For example, instead of asking AI to "summarize this resume," a well-trained recruiter asks: "Analyze this resume for a senior logistics coordinator role. Focus on experience with fleet management software, team leadership, and DOT compliance. Provide specific examples from their work history that demonstrate these capabilities."

The second prompt produces actionable insights. The first produces generic fluff.

This skill costs little more than practice time to develop, but it multiplies the value of every AI tool you deploy.
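The context, task, and format structure described above can be turned into a reusable template so every recruiter starts from the same shape. This is a minimal sketch: `build_prompt` and its parameters are hypothetical names, and the output is plain text you would paste into, or send to, whichever AI tool you use.

```python
from typing import List


def build_prompt(context: str, task: str, focus_areas: List[str], output_format: str) -> str:
    """Assemble a prompt with explicit context, task, focus areas, and format."""
    if not focus_areas:
        raise ValueError("give the model at least one focus area")
    focus_lines = "\n".join(f"- {area}" for area in focus_areas)
    return (
        f"Context: {context}\n\n"
        f"Task: {task}\n\n"
        f"Focus on:\n{focus_lines}\n\n"
        f"Output format: {output_format}"
    )


# Rebuilds the logistics coordinator example from the text.
prompt = build_prompt(
    context="We are hiring a senior logistics coordinator.",
    task="Analyze the resume below for fit with this role.",
    focus_areas=[
        "fleet management software",
        "team leadership",
        "DOT compliance",
    ],
    output_format=(
        "For each focus area, cite specific examples from the work history "
        "that demonstrate the capability."
    ),
)
print(prompt)
```

A template like this also makes iteration easier: when output quality drops, you change one field and compare results instead of rewriting the whole request.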

Redesign Workflows Before You Automate Them

AI doesn't fix broken processes. It accelerates them.

If your current hiring workflow has bottlenecks, redundancies, or unnecessary steps, automating it with AI just creates faster chaos.

I learned this the hard way. Early in my AI implementation journey, I automated our candidate screening process without questioning whether our screening criteria made sense. We processed candidates faster, but we still hired the wrong people.

Workflow redesign means:

Mapping every step in your current process

Identifying which steps add value

Eliminating steps that exist only because "we've always done it that way"

Determining which tasks AI should handle

Defining clear handoffs between AI and humans

Your redesigned workflow should leverage AI for repetitive, data-heavy tasks while keeping humans focused on relationship building, nuanced decision-making, and strategic thinking.

In my Hybrid AI Workforce framework, AI handles initial candidate outreach, resume screening, and scheduling. Humans handle phone screens, interviews, and final hiring decisions.

This division plays to the strengths of both. AI provides scale and consistency. Humans provide judgment and empathy.

Establish Clear Protocols for Human-AI Collaboration

AI augments human capabilities. It doesn't replace them.

Your team needs clear guidelines on when to trust AI recommendations and when to override them.

I've seen two dangerous extremes: Teams that ignore AI insights because they don't trust technology, and teams that follow AI recommendations blindly because they assume the algorithm knows best.

Both approaches waste the potential of AI systems.

Your protocols should define:

Which decisions AI can make independently

Which decisions require human review

How to escalate when AI and human assessments conflict

When to update AI models based on human feedback

How to document decisions for continuous improvement

For example, in my recruiting systems, AI can automatically reject candidates who don't meet minimum qualifications. But any candidate who meets that threshold must be reviewed by a human before they can be rejected.

This balance maintains efficiency without sacrificing judgment.

Your team should feel empowered to question AI outputs. The best AI systems improve when humans provide feedback on edge cases and unexpected scenarios.
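The protocol described above, where AI may reject only candidates below the minimum qualifications and everyone else goes to a person, can be written down as an explicit routing rule. The sketch below is one way to express it. The `Candidate` fields, the three-year minimum, and the certification requirement are placeholders I made up; the point is that the rule is visible, testable, and leaves a documented reason for every decision.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Route(Enum):
    AUTO_REJECT = "auto_reject"    # AI may decide on its own
    HUMAN_REVIEW = "human_review"  # a person must make the call


@dataclass
class Candidate:
    name: str
    years_experience: float
    has_required_certification: bool


def route_candidate(candidate: Candidate, min_years: float = 3.0) -> Tuple[Route, str]:
    """Return the route plus a plain-language reason to log for later review."""
    if candidate.years_experience < min_years:
        return Route.AUTO_REJECT, f"under {min_years} years of experience"
    if not candidate.has_required_certification:
        return Route.AUTO_REJECT, "missing required certification"
    # Meets the minimum bar: never rejected without a human looking first.
    return Route.HUMAN_REVIEW, "meets minimum qualifications"


# Made-up candidates for illustration only.
for person in [
    Candidate("A. Rivera", 6.0, True),
    Candidate("B. Chen", 1.5, True),
    Candidate("C. Okafor", 4.0, False),
]:
    decision, reason = route_candidate(person)
    print(f"{person.name}: {decision.value} ({reason})")
```

Logging the reason alongside the route gives you the documentation trail the protocol calls for, and it makes human overrides easy to compare against what the rule decided.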

Invest in Continuous Learning and Adaptation

AI technology evolves faster than traditional business tools.

The AI capabilities available today will look primitive in 18 months. Your team needs a learning mindset, not just a training program.

I allocate time every week for my team to experiment with new AI tools and share discoveries. This isn't formal training. It's structured exploration.

Your continuous learning approach should include:

Regular reviews of new AI tools in your industry

Testing periods for promising technologies

Internal knowledge sharing sessions

Partnerships with AI vendors for early access

Documentation of what works and what doesn't

The companies winning with AI aren't necessarily the ones with the biggest budgets. They're the ones with the fastest learning cycles.

You test a new approach, measure results, keep what works, and discard what doesn't. Then you repeat.

This requires psychological safety. Your team needs permission to experiment and fail without career consequences.

The alternative is stagnation. You implement AI once, then watch competitors pass you as technology advances.

The Reality of AI-Ready HR Teams

Building an AI-ready HR team isn't about replacing people with technology.

It's about equipping people with capabilities that multiply their impact.

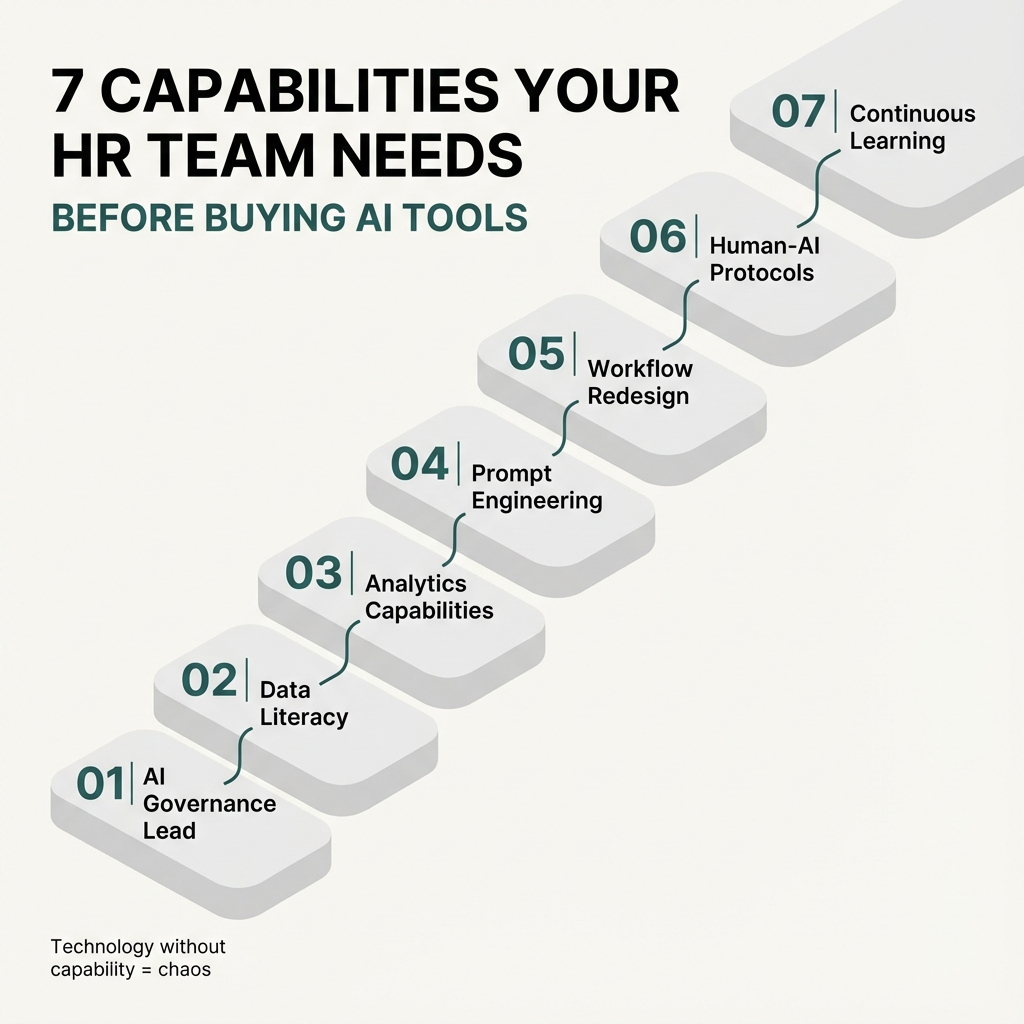

The seven best practices I've outlined (governance leadership, data literacy, analytics capabilities, prompt engineering, workflow redesign, collaboration protocols, and continuous learning) create the foundation for sustainable AI adoption.

Small and mid-sized businesses have an advantage here. You can implement these practices faster than large enterprises. You can experiment without layers of approval. You can adapt quickly when something doesn't work.

The Hybrid AI Workforce approach I've developed over the past five years shows that you don't need Fortune 500 resources to compete with Fortune 500 capabilities.

You need the right people with the right skills using the right frameworks.

Start with one role. Build one capability. Redesign one workflow.

Then measure what changes.

The companies that master these best practices won't just adopt AI. They'll use it to dominate their markets while their competitors are still figuring out which tools to buy.

That's the difference between following trends and setting them.